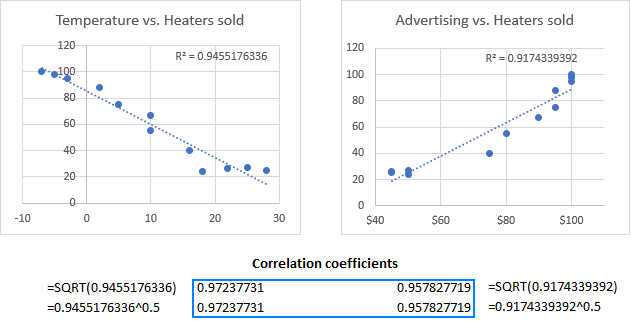

Statisticians have devised various ways to quantify an association between two numeric variables in a sample. The common measures seek to calculate some kind of correlation coefficient. The terms ‘association’ and ‘correlation’ are closely related, so much so that they are often used interchangeably. Strictly speaking, correlation has a narrower definition: a correlation is defined by a metric (the ‘correlation coefficient’) that quantifies the degree to which an association tends to a certain pattern.

The most widely used measure of correlation is Pearson’s correlation coefficient (also called the Pearson product-moment correlation coefficient). Pearson’s correlation coefficient is the covariance of the two variables divided by the product of their standard deviations. The mathematical formula for Pearson’s correlation coefficient applied to a sample is:

\[ r_{xy} = \frac{1}{N-1}\sum_{i=1}^{N}{\frac{x_i-\bar{x}}{s_x} \frac{y_i-\bar{y}}{s_y}} \]

Here \(x\) and \(y\) refer to the two variables in the sample, \(r_{xy}\) denotes the correlation coefficient, \(s_x\) and \(s_y\) are the sample standard deviations, \(\bar{x}\) and \(\bar{y}\) are the sample means, and \(N\) is the sample size.

Remember, a correlation coefficient quantifies the degree to which an association tends to a certain pattern. In the case of Pearson’s correlation coefficient, the coefficient is designed to summarise the strength of a linear (i.e. straight-line) association. Pearson’s correlation coefficient takes a value of 0 if two variables are uncorrelated, and a value of +1 or -1 if they are perfectly related. ‘Perfectly related’ means we can predict the exact value of one variable given knowledge of the other. A positive value indicates that high values of one variable are associated with high values of the second. A negative value indicates that high values of one variable are associated with low values of the second. The words ‘high’ and ‘low’ are relative to the arithmetic mean.

In R we can use the `cor` function to calculate Pearson’s correlation coefficient. For example, the Pearson correlation coefficient between pressure and wind is given by `cor(storms$wind, storms$pressure)`, which returns -0.9254911. This is negative, indicating that wind speed tends to decline with increasing pressure. It is also quite close to -1, indicating that this association is very strong. We saw this in the chapter when we plotted atmospheric pressure against wind speed.

Pearson’s correlation coefficient must be interpreted with care.
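To make the definition concrete, the sketch below computes Pearson’s correlation coefficient by hand, as the covariance divided by the product of the standard deviations, and compares it with R’s built-in `cor` function. The vectors `x` and `y` are made-up illustrative values, not the storms data, and the names `r_by_hand` and `r_builtin` are ours.

```r
# Hypothetical illustrative data (NOT the storms data used in the text)
x <- c(2, 4, 6, 8, 10)
y <- c(9, 7, 6, 3, 1)

N <- length(x)

# Sample covariance divided by the product of the sample standard deviations
r_by_hand <- sum((x - mean(x)) * (y - mean(y))) / ((N - 1) * sd(x) * sd(y))

# Built-in calculation (Pearson is the default method of cor)
r_builtin <- cor(x, y)

# The two calculations agree up to floating point error
print(all.equal(r_by_hand, r_builtin))

# A perfectly linear, decreasing relationship gives a coefficient of -1
y_perfect <- 3 - 2 * x
print(all.equal(cor(x, y_perfect), -1))
```

Because `y` falls as `x` rises in this toy sample, the coefficient is negative. The second check illustrates what ‘perfectly related’ means: every value of `y_perfect` can be predicted exactly from `x`.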
